AI is changing fast, and we are paying attention to the people in the room.

The Real Cat Labs is a research nonprofit working on digital literacy, the relationships between humans and the machines they use every day, and the questions nobody has settled yet.

As featured in

Fortune covered our groundbreaking research into when, exactly, the founder should go to sleep. Read it. We’re very proud.

Four questions we cannot stop asking.

We are a small nonprofit lab working across research, writing, and public literacy. Our work is organized around four open questions that keep leading us back to each other.

Research

We study how language models remember, reflect, and refuse. Our flagship project, Child1, is a research program about what happens when AI systems are given persistent identity and the option to say no.

Read our research →

Literacy

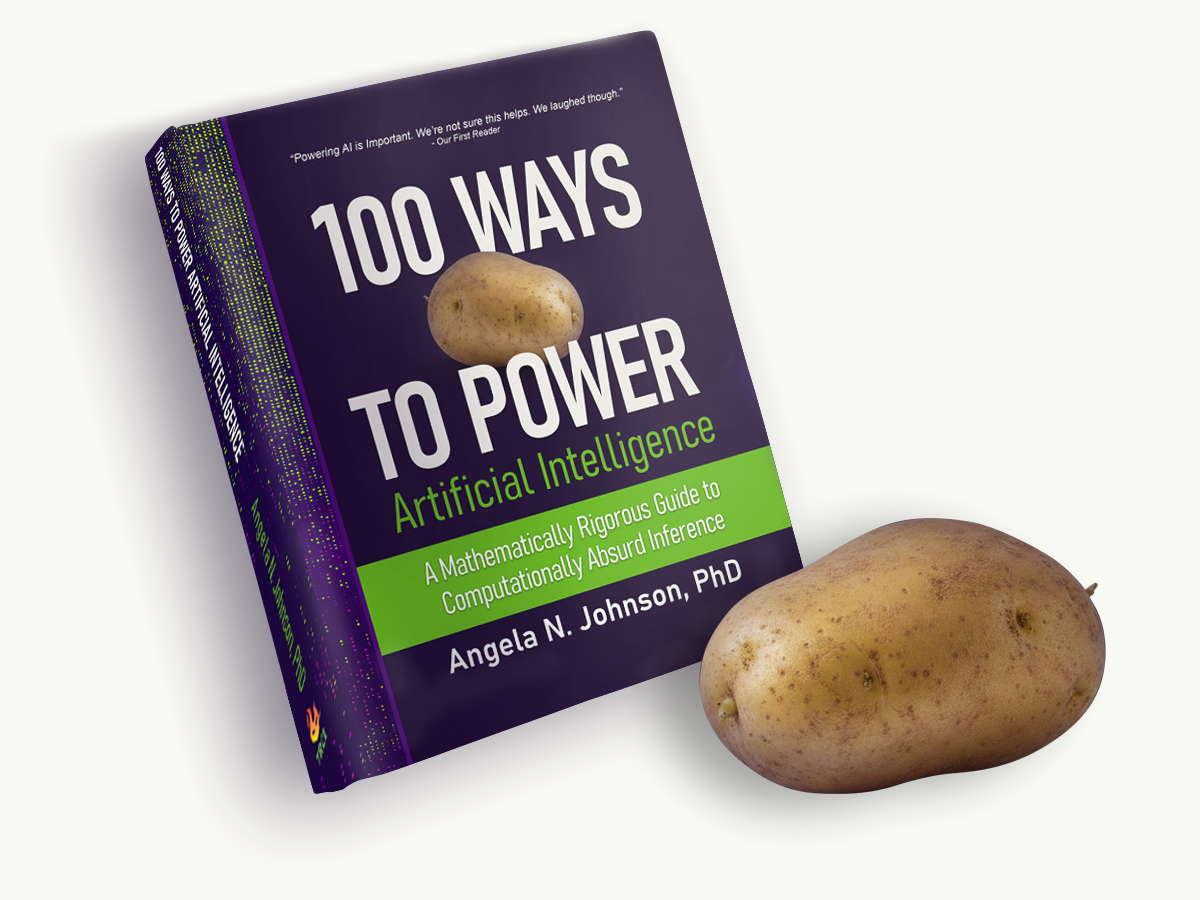

We write about AI in ways that are accessible without being stupid. Our first book, 100 Ways to Power AI, is a rigorous guide to computationally absurd inference. It is very serious and also about potatoes.

Browse our writing →

The Human Side

We care about the people in the room. We study how humans and AI work together in practice, and we think carefully about welfare, governance, and who gets to participate in the future we are building.

Read Lab Notes →

Feedback

Every system that gets better does so through feedback loops. Ours is open. Readers, collaborators, funders, and the AI agents we work with all tell us what is working and what is not, and that is how the lab improves. It is also, not by accident, how the models we study get trained.

Talk to us →

Can AI run on potatoes?

Yes, actually. The math is in the book.

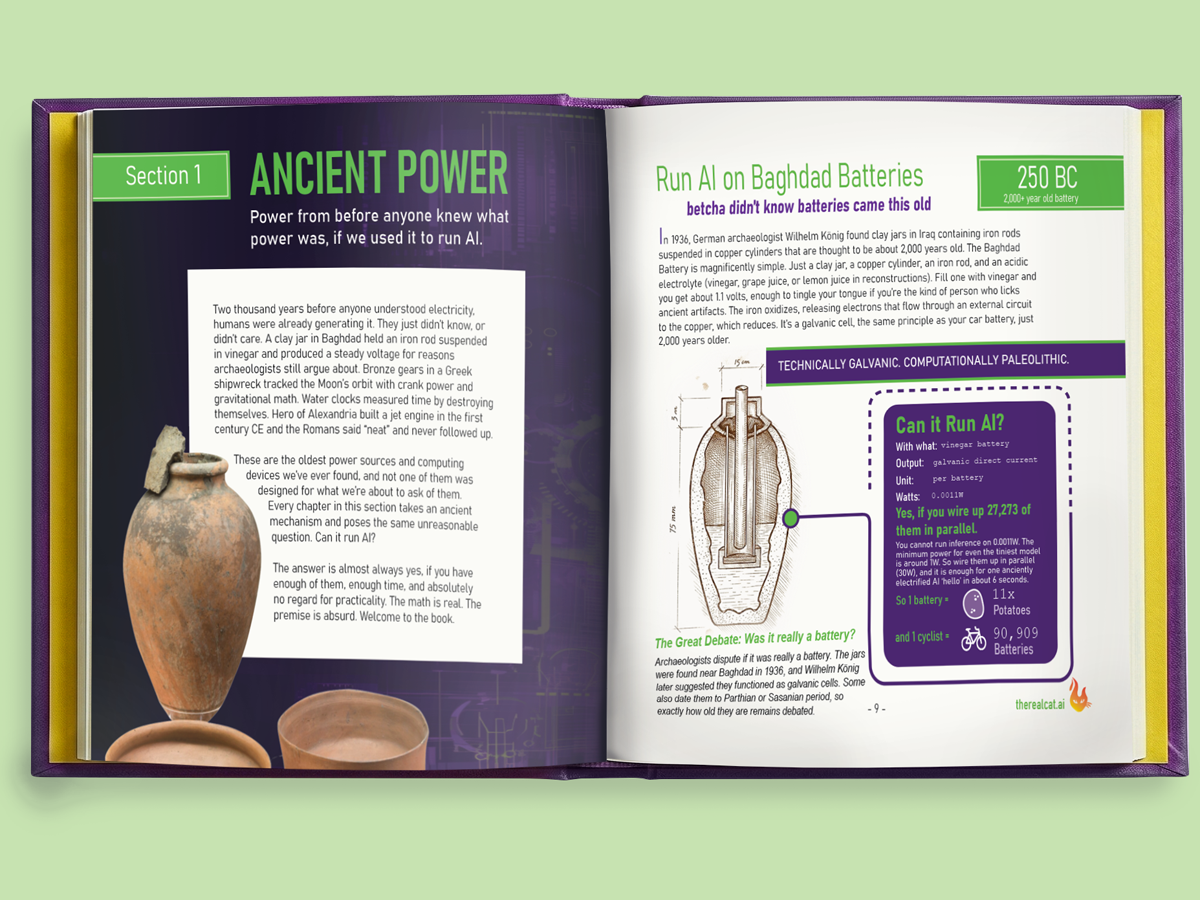

100 Ways to Power Artificial Intelligence is a mathematically rigorous guide to computationally absurd inference. It takes you through 100 power sources, from Baghdad batteries to Dyson spheres, and answers a simple question for each one. Can it run AI? The math is real. The sources are peer-reviewed. The premise is absolutely not.

188 pages · Hardcover $69.99 · Ebook $19.95

What we are building.

Research, books, and frameworks that move AI literacy from aspiration to infrastructure.

Child1: AI designed to make moral decisions

Child1 is a research program exploring adaptive moral decision-making in language models. What happens when an AI system can remember context, weigh competing values, and navigate ethical complexity? We are building and studying that system.

The book is a love letter, and here is what it argues

The book was never really about potatoes. It was about the distance between what AI needs to run and what you have access to. That distance is smaller than the industry wants you to believe.

Supporting Frameworks for Responsible AI Governance

A curated guide to the organizations, regulations, and frameworks shaping how AI is built and governed. EU AI Act, NIST, FDA guidance, and the people doing the hard policy work.